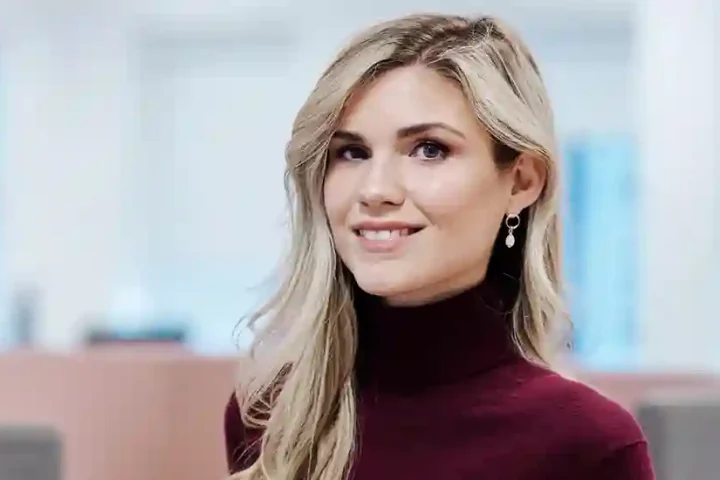

As AI moves beyond digital environments, a new frontier is emerging: Physical AI, in which intelligent systems interact directly with the real world. Suliman Gaouda, Regional Vice President, AI – APJ & MEA at IFS, explains how AI is evolving from generating insights to driving real-world outcomes across industrial environments.

1) How do you define Physical AI, and how is it different from software-only or generative AI?

Physical AI is AI that closes the loop with the real world: sense → understand → decide → act → learn under real constraints. It does not just generate answers; it drives outcomes in environments governed by physics, safety, timing, compliance, and imperfect information.
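To make the sense → understand → decide → act → learn loop concrete, here is a minimal Python sketch of a single control loop. Everything in it is a hypothetical toy, not an IFS implementation: `TankPlant` stands in for a real process, sensor noise is simulated, and `run_loop`, its gains (`kp`, `ki`), and its smoothing factor (`alpha`) are illustrative assumptions. Smoothing plays the role of "understand", clamping the valve to its physical range is the hard constraint, and the slowly adapted `bias` is a simple form of "learn" (integral action).

```python
import random
from dataclasses import dataclass, field


@dataclass
class TankPlant:
    """Hypothetical stand-in for a physical process: a constant inflow adds
    2 units of pressure per step; opening the valve (0..1) releases up to 5."""
    pressure: float = 50.0

    def apply(self, valve: float) -> None:
        self.pressure += 2.0 - 5.0 * valve


@dataclass
class LoopResult:
    pressures: list[float] = field(default_factory=list)
    valves: list[float] = field(default_factory=list)
    learned_bias: float = 0.0


def run_loop(steps: int = 200, setpoint: float = 40.0, noise_std: float = 1.0,
             seed: int = 0, kp: float = 0.1, ki: float = 0.01,
             alpha: float = 0.5) -> LoopResult:
    rng = random.Random(seed)      # seeded so runs are reproducible
    plant = TankPlant()
    estimate = plant.pressure      # the loop's belief about the true pressure
    bias = 0.0                     # learned valve opening that holds pressure steady
    result = LoopResult()
    for _ in range(steps):
        # Sense: a noisy sensor reading of the true pressure.
        reading = plant.pressure + rng.gauss(0.0, noise_std)
        # Understand: exponential smoothing filters out sensor noise.
        estimate = (1 - alpha) * estimate + alpha * reading
        error = estimate - setpoint
        # Decide: proportional correction around the learned bias,
        # clamped to the valve's physical range (a hard constraint).
        valve = min(1.0, max(0.0, bias + kp * error))
        # Act: the command changes the physical state.
        plant.apply(valve)
        # Learn: slowly adjust the bias from persistent error (integral action).
        bias += ki * error
        result.pressures.append(plant.pressure)
        result.valves.append(valve)
    result.learned_bias = bias
    return result
```

With `noise_std=0.0` the pressure settles at the 40-unit setpoint and the learned bias converges to 0.4, the valve opening that exactly offsets the inflow. With noise switched on, the valve command still never leaves its safe range.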

At the World Economic Forum Annual Meeting 2026, Jensen Huang, CEO of NVIDIA, described AI moving toward real-world intelligence that operates within physical environments. That is precisely the space IFS has served since its founding: industrial environments.

The key differences from GenAI are:

- Action vs. content: Physical AI produces actions such as robot movement, valve adjustments, or operational decisions.

- Reliability and latency: Errors in industrial systems can have major safety and financial consequences, and many decisions must be made within strict time limits.

- Uncertainty handling: Real environments involve noisy sensors, changing conditions, and edge cases.

- Human-centered operations: The focus is on technicians, operators, and field workers where safety, uptime, and productivity are critical.

This is why we describe our approach as “Industrial AI that matters”—AI embedded where real work happens.

2) Is Physical AI robotics with AI or AI with a physical embodiment?

In industrial reality, it is both. Physical AI combines three components:

- Embodied capability – robots or machines that act in the physical world.

- Operational intelligence – situational awareness of assets and environments.

- Governed autonomy – decisions and actions performed under human supervision.
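Governed autonomy can be sketched as a gate between an agent's proposal and its execution. The Python below is a hypothetical illustration, not a product API: the risk score, the `autonomy_threshold` of 0.3, and the `govern` function are all assumptions. Low-risk actions run autonomously, higher-risk actions need explicit human approval, and every decision is written to an audit log so accountability stays with people.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Outcome(Enum):
    EXECUTED = "executed"
    APPROVED = "approved_and_executed"
    REJECTED = "rejected"


@dataclass(frozen=True)
class ProposedAction:
    description: str
    risk: float  # hypothetical score: 0.0 (harmless) .. 1.0 (safety-critical)


def govern(action: ProposedAction,
           approve: Callable[[ProposedAction], bool],
           execute: Callable[[ProposedAction], None],
           audit_log: list[str],
           autonomy_threshold: float = 0.3) -> Outcome:
    """Let an AI agent act alone only on low-risk actions; escalate the rest
    to a human supervisor, and record every decision for accountability."""
    if action.risk < autonomy_threshold:
        execute(action)                 # within the agent's delegated authority
        outcome = Outcome.EXECUTED
    elif approve(action):               # human-in-the-loop decision
        execute(action)
        outcome = Outcome.APPROVED
    else:
        outcome = Outcome.REJECTED      # nothing happens in the physical world
    audit_log.append(f"{outcome.value}: {action.description} (risk={action.risk:.2f})")
    return outcome
```

In practice `approve` would route to a supervisor's queue and `execute` to a robot or control system; here both are plain callables so the policy itself stays easy to test.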

The real shift is the connection between humans, AI agents, and robots. Many organizations deploy AI agents in digital workflows, but in asset-intensive environments the real opportunity lies in coordinated autonomy: AI agents orchestrating decisions while robots execute them.

At IFS, solutions like Operational Intelligence create a real-time operational view, Digital Workers automate workflows, and copilots support frontline workers. Partnerships with companies such as Microsoft, Siemens, Anthropic, and Boston Dynamics help accelerate this ecosystem.

Ultimately, robots will act as industrial actuators, but the real value comes from the system connecting intent → planning → execution → learning across operations.

3) What are the biggest technical challenges in moving AI into physical environments?

The hardest part is not making AI intelligent in controlled environments—it is making it safe, reliable, and robust in the real world.

A major challenge is the “reality gap” between simulation and real environments. Models trained in simulations often struggle when faced with noisy sensors, unpredictable conditions, or complex physical dynamics.

Key challenges include:

- Environmental variability and sensor noise

- Real-time performance requirements

- Safety and verification

- Data limitations and fragmented systems

- Human interaction and trust

- Integration across operational processes

Industrial organizations do not need AI that works only in demos—they need systems that survive contact with reality. That is why our focus is on governed autonomy, workflow-driven execution, and field-based co-innovation with customers.

4) How important are simulation, digital twins, and synthetic data?

They are essential because the physical world is expensive, slow, and risky for experimentation.

A useful way to frame their roles:

- Simulation – the training ground

- Digital twins – the operational mirror

- Synthetic data – coverage for rare events and edge cases

In industrial environments, digital twins enable organizations to simulate operations, detect anomalies, forecast outcomes, and trigger real-world actions.
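As a rough illustration of how a digital twin can detect an anomaly and trigger an action, the sketch below compares each real measurement with what a model of the asset predicts. The `PumpTwin` class, its linear temperature model, and the tolerance and persistence values are all hypothetical; a production twin would rely on a validated physics-based or data-driven model. Requiring several consecutive out-of-tolerance readings keeps a single noisy sample from raising a maintenance request.

```python
from dataclasses import dataclass, field


@dataclass
class PumpTwin:
    """Hypothetical digital twin of a pump: predicts bearing temperature (°C)
    from load (%). The linear model and its coefficients are illustrative."""
    ambient_c: float = 25.0
    c_per_load_pct: float = 0.4
    tolerance_c: float = 5.0   # residual still considered normal
    persistence: int = 3       # consecutive deviations required before acting
    _strikes: int = field(default=0, init=False)

    def expected_temp(self, load_pct: float) -> float:
        return self.ambient_c + self.c_per_load_pct * load_pct

    def observe(self, load_pct: float, measured_c: float) -> bool:
        """Mirror one real measurement; return True when maintenance should be triggered."""
        residual = measured_c - self.expected_temp(load_pct)
        # Count consecutive out-of-tolerance readings; any normal reading resets the count.
        self._strikes = self._strikes + 1 if abs(residual) > self.tolerance_c else 0
        return self._strikes >= self.persistence


twin = PumpTwin()
readings = [(50, 45.5), (50, 46.0), (60, 58.0), (60, 57.5), (60, 58.5)]
alerts = [twin.observe(load, temp) for load, temp in readings]
```

Here the pump runs about 9 °C hotter than the twin expects at 60% load, so the third consecutive deviation is the first to return `True`, which a caller could use to open a work order.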

At IFS, Operational Intelligence effectively creates a real-time digital twin of operations, connecting sensors, analytics, and service actions.

Physical AI simply cannot scale without these safe testing environments.

5) Which industries will feel the impact first?

The earliest impact is already visible where automation ROI is clear and environments are structured.

Manufacturing leads the way. The International Federation of Robotics reports over 4.28 million industrial robots operating globally.

Logistics and warehousing are also advancing rapidly due to labor shortages and demand for speed.

Next will be energy, utilities, and defense, where uptime and safety are critical.

Healthcare adoption will grow, but more cautiously, because of regulatory and safety requirements.

Smart cities will benefit through infrastructure systems like utilities, fleets, and traffic management.

Overall, the greatest impact will occur in industrial value chains—plants, fleets, warehouses, and field operations—where AI directly improves uptime, throughput, safety, and service.

6) How does Physical AI change traditional automation strategies?

Traditional automation tends to be deterministic, pre-programmed, and siloed. Physical AI shifts this model in three ways:

- Programmed automation → adaptive autonomy: Systems can respond to changing conditions using AI perception and learning.

- Robot cell ROI → end-to-end operational ROI: The greatest value emerges when robotics connects with planning, maintenance, inventory, and service workflows.

- Point solutions → integrated orchestration: AI, agents, and automation must operate within a unified operational platform.

At IFS, copilots and digital workers are embedded directly into enterprise systems that manage assets, service operations, and supply chains.

7) What role do edge computing and real-time inference play?

They are critical for Physical AI.

Many industrial decisions must happen in milliseconds, making cloud-only processing impractical. Edge computing enables faster responses, resilience in low-connectivity environments, and better data control.
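The edge-versus-cloud trade-off is essentially a latency-budget check. The sketch below is a hypothetical illustration with made-up numbers, not a reference architecture: `choose_inference_site` and `Route` are invented names. It runs inference on the edge when that fits the deadline, uses the cloud only if the link is up and the network round trip plus inference still fits, and otherwise falls back to a predefined safe state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    target: str        # "edge", "cloud", or "fail-safe"
    latency_ms: float  # expected time to a decision (0.0 when no inference runs)


def choose_inference_site(deadline_ms: float,
                          edge_infer_ms: float,
                          cloud_infer_ms: float,
                          network_rtt_ms: float,
                          link_up: bool) -> Route:
    """Pick where to run a decision so it lands inside its deadline.
    Prefers the edge (no network dependency); uses the cloud only if the
    link is up and the full round trip still fits the budget."""
    if edge_infer_ms <= deadline_ms:
        return Route("edge", edge_infer_ms)
    cloud_total = network_rtt_ms + cloud_infer_ms
    if link_up and cloud_total <= deadline_ms:
        return Route("cloud", cloud_total)
    # Neither option meets the deadline: hold the machine in a safe state.
    return Route("fail-safe", 0.0)
```

For example, with a 10 ms deadline, a 40 ms network round trip rules out the cloud before any inference happens, so only an edge model that answers in time can close the loop.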

Industry trends reinforce this shift. International Data Corporation estimates that global edge computing spending will exceed $261 billion, while the number of connected IoT devices continues to grow rapidly.

The broader convergence is AI + robotics + edge computing, with quantum technologies potentially accelerating optimization and simulation in the future.

8) How will Physical AI redefine human–machine collaboration?

The future is not humans versus machines, but humans working with machines.

Discussions at the World Economic Forum emphasize that AI will reshape work by augmenting human capabilities rather than simply replacing them.

In industrial settings, we see three complementary roles:

- Copilots help workers make faster decisions.

- Digital workers automate repetitive workflows.

- Robots handle heavy or hazardous physical tasks.

The goal is to make frontline workers safer, more productive, and more capable.

9) Will Physical AI augment humans or replace them?

Both will occur, but most industrial value will come from augmentation and operational redesign, not pure replacement.

Many industries already face labor shortages and experience gaps, so AI helps smaller workforces operate more effectively.

Automation will remove routine administrative tasks and some repetitive physical activities, but humans will continue to supervise systems and retain accountability.

The enduring model is clear: humans oversee, AI expands capability, and robots handle risk and repetition, all within governed workflows where autonomy operates safely.